The Model Isn’t the Hard Part

By 2026, there will be an estimated 750 million apps using LLMs. The model market is crowded, noisy, and changing quarterly. GPT-4o, Claude, Gemini, Llama, Mistral, DeepSeek. New names every month.

Here’s what we tell clients: the model choice matters less than you think. The hard part is the architecture around it. Data pipelines, integration, prompt engineering, monitoring, compliance.

Still, you need to pick one. Here’s how to think about it.

The Three Categories

The LLM market breaks into three buckets. Each has different trade-offs for business use.

Proprietary cloud models (GPT-4o, Claude, Gemini) offer the highest capability with zero infrastructure management. You pay per API call. Your data goes to their servers.

Open-source models (Llama 3, Mistral, DeepSeek V3) give you full control. You host them yourself. No per-query costs, but significant infrastructure investment.

Managed open-source (through providers like AWS Bedrock, Azure ML, or Replicate) splits the difference. Open-source models hosted by cloud providers. Easier than self-hosting, cheaper than proprietary APIs.

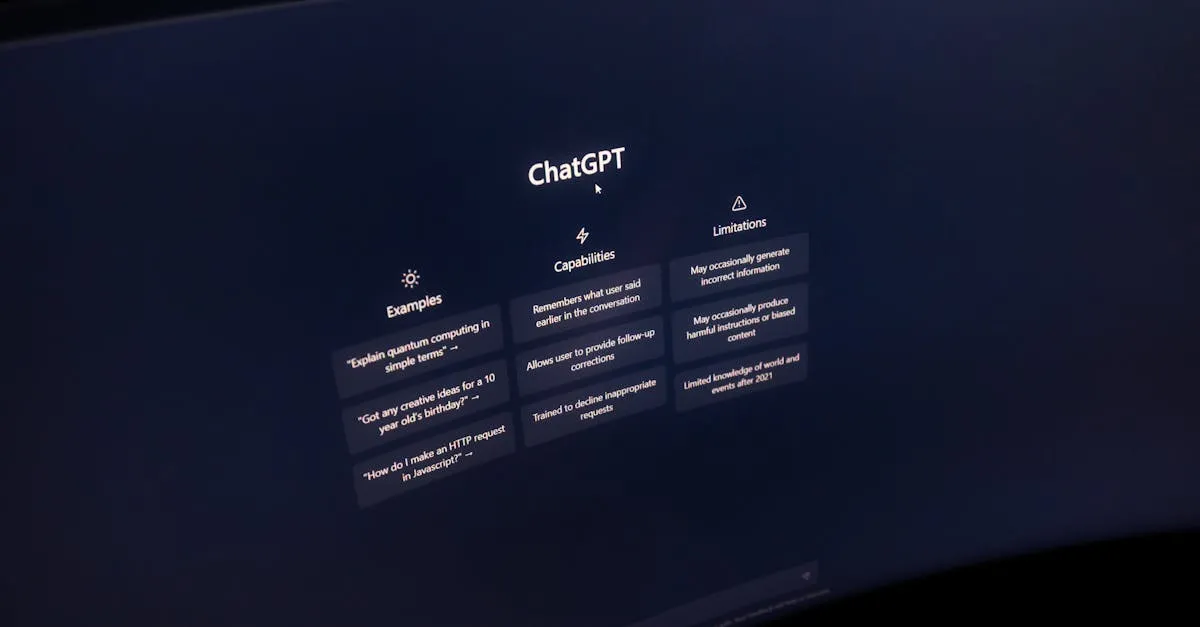

GPT-4o: The Default Choice

OpenAI’s GPT-4o is the market leader. Strongest general-purpose performance. Best coding capabilities. Widest ecosystem of tools and integrations.

Pricing: roughly $2.50 per million input tokens, $10 per million output tokens. For a typical business application processing 10,000 queries per day at a few hundred tokens per query, that’s approximately EUR 300-800/month. Longer prompts or responses push the bill up proportionally.
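Here is a back-of-the-envelope sketch of that kind of estimate. The exact token counts per query (300 in, 100 out) are our assumption for illustration, the function name is hypothetical, and the result is in USD with no currency conversion applied.

```python
def monthly_api_cost(queries_per_day, input_tokens, output_tokens,
                     price_in_per_m, price_out_per_m, days=30):
    """Estimate monthly API spend (USD) from per-million-token prices."""
    queries = queries_per_day * days
    cost_in = queries * input_tokens / 1_000_000 * price_in_per_m
    cost_out = queries * output_tokens / 1_000_000 * price_out_per_m
    return cost_in + cost_out


# Assumed workload: 10,000 queries/day, 300 input and 100 output tokens each.
estimate = monthly_api_cost(10_000, 300, 100, 2.50, 10.00)
print(f"${estimate:,.2f} per month")  # prints "$525.00 per month"
```

Swap in your own measured token counts before trusting the number. Output tokens cost four times as much as input tokens at these prices, so response length often matters more than prompt length.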

Where it wins: complex reasoning tasks, code generation, multimodal applications (processing images and text together). The broadest capability set of any model.

Where it falls short: data privacy concerns for European companies, potential vendor lock-in, higher cost than alternatives for simpler tasks.

Claude: The Careful Choice

Anthropic’s Claude excels at long-form reasoning, document analysis, and tasks where reliability matters more than creativity. It’s trained with Constitutional AI, Anthropic’s method of aligning the model against a written set of principles, which is intended to make its behavior more predictable.

Pricing: slightly above GPT-4o. Roughly $3 per million input tokens, $15 per million output tokens for Claude 3.5 Sonnet. Cheaper options exist for simpler tasks.

Where it wins: document summarization, legal and compliance text, long context windows (up to 200K tokens), and applications where safety and alignment are priorities.

Where it falls short: slightly behind GPT-4o on certain coding benchmarks, smaller ecosystem of third-party tools and integrations.

Open Source: The Control Choice

Llama 3 (Meta), Mistral, and DeepSeek V3 compete with proprietary models on many benchmarks. DeepSeek V3 scores within a few percentage points of GPT-4o on coding tasks at a fraction of the cost.

The economics are dramatic. Self-hosted open-source models eliminate per-query API costs entirely. You pay for compute infrastructure: roughly EUR 500-2,000/month for a capable GPU setup.
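To see where self-hosting starts to pay off, a rough break-even sketch helps. Everything here is an assumption for illustration: the function name, the per-query token counts, and the simplification that hosting and API prices are in the same currency. It also ignores the staff time needed to run the infrastructure.

```python
def breakeven_queries_per_month(hosting_cost, input_tokens, output_tokens,
                                price_in_per_m, price_out_per_m):
    """Monthly query volume at which a flat hosting bill equals API spend."""
    cost_per_query = (input_tokens * price_in_per_m
                      + output_tokens * price_out_per_m) / 1_000_000
    return hosting_cost / cost_per_query


# Assumed: 1,000/month hosting, 300 input and 100 output tokens per query,
# compared against API prices of 2.50 (input) and 10.00 (output) per million.
volume = breakeven_queries_per_month(1_000, 300, 100, 2.50, 10.00)
print(f"Break-even at about {volume:,.0f} queries per month")
```

Below the break-even volume the API is cheaper. Above it, the flat hosting bill wins, provided the hardware can actually serve that load.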

Where it wins: data sovereignty (everything stays on your servers), no vendor dependency, dramatically lower cost at high volume, and full control over model behavior.

Where it falls short: higher operational burden (you manage the infrastructure), potentially slower iteration on model improvements, and smaller support community for enterprise use cases.

For European companies with GDPR concerns, open source on-premise deserves serious consideration. We break this down in our guide on privacy-first AI for European companies.

How to Choose

Don’t start with the model. Start with the use case.

For document processing and extraction, any of the major models work. Choose based on cost and data sensitivity.

For customer support triage, GPT-4o or Claude paired with retrieval-augmented generation (RAG) delivers the best results. The retrieval system matters more than the generation model.

For internal knowledge search, Claude’s long context window is an advantage. But open-source models with proper RAG architecture close the gap.

For regulated industries processing sensitive data, open-source on-premise is often the only option that satisfies compliance requirements.

Most companies don’t need to commit to one model permanently. Build your architecture model-agnostic from the start. Use an abstraction layer that lets you swap models as the market evolves.
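A minimal sketch of what such an abstraction layer can look like. All names here (ChatModel, EchoModel, REGISTRY, get_model) are hypothetical, and EchoModel is a stand-in that returns canned text. In a real project each backend would wrap a vendor SDK or your own inference server behind the same interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class ChatModel(Protocol):
    """Anything that can turn a prompt into a completion."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in backend for local testing; a real adapter would call a model."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# The registry maps a config key to a factory, so swapping models
# becomes a configuration change rather than a code change.
REGISTRY: Dict[str, Callable[[], ChatModel]] = {
    "proprietary": lambda: EchoModel("proprietary-api"),
    "self-hosted": lambda: EchoModel("self-hosted"),
}


def get_model(key: str) -> ChatModel:
    return REGISTRY[key]()


def summarize(model: ChatModel, text: str) -> str:
    # Application code depends only on the ChatModel interface.
    return model.complete(f"Summarize: {text}")


print(summarize(get_model("proprietary"), "Q3 report"))
```

The point of the design is that summarize never imports a vendor library. Prompts may still need tuning per model, so treat a swap as cheap, not free.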

The Pricing Trap

LLM pricing changes quarterly. GPT-4 launched at $30 per million input tokens. Within two years, GPT-4o cost about $2.50 per million input tokens, less than a tenth of that. So far the trend has been steadily downward.

Don’t over-optimize for today’s pricing. The model that costs 20% more now but fits your security requirements is the better long-term choice.

What actually drives cost: not the per-token price but the number of tokens per request. Poorly designed prompts that send 10,000 tokens when 2,000 would suffice cost 5x more regardless of which model you use.
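The arithmetic is easy to check. In this sketch the request volume (300,000 per month) and the function name are our assumptions. The two prices are the per-million input prices quoted for GPT-4o and Claude earlier in this article.

```python
def input_cost(tokens_per_request, requests, price_per_m):
    """Input-side spend for a given prompt size."""
    return tokens_per_request * requests * price_per_m / 1_000_000


for price in (2.50, 3.00):
    bloated = input_cost(10_000, 300_000, price)
    lean = input_cost(2_000, 300_000, price)
    print(f"price {price:.2f}: bloated {bloated:,.0f}, lean {lean:,.0f}, "
          f"ratio {bloated / lean:.1f}x")
```

The ratio is 5x at either price, which is why trimming prompts beats switching vendors. Note that this covers input tokens only. Output tokens are billed separately and are unaffected by prompt length, so the effect on the total bill is somewhat smaller.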

Prompt engineering saves more money than model shopping. Invest there first.

Our Recommendation

For most SMBs starting their first AI project, use GPT-4o or Claude via API. Fastest time to production. Lowest infrastructure burden. Well-documented. Easy to find developers who know them.

If having your data on their servers concerns you, evaluate open-source on-premise. The quality gap has closed significantly.

Don’t spend months evaluating models. Pick one, build a pilot, measure results. You can always switch later if your architecture is designed for it.

For the broader picture on integrating AI into your workflows, read our AI workflow integration guide. And if cost is your primary concern, our AI integration cost guide has the full breakdown.

Need help picking the right model for your use case? Let’s evaluate it together. We’ll assess your requirements, data sensitivity, and budget and recommend the best fit.